Research

Selected Talks

2026:

• CJCMA 2026 Congrès des Jeunes Chercheur•es en Mathématiques Appliquées. Conference for young researchers in applied mathematics. 2-4 March, Champs-sur-Marne.

2025:

• 4ème Journée d'études de l'ARCOM (4th ARCOM study day): presentation of research on audiovisual and digital media. 13 November, Paris. Video available here.

• CCS 2025 International Conference on Complex Systems, 1-5 September, Siena, Italy.

• frCCS 2025 French Local Chapter of the Conference on Complex Systems / Sunbelt conference, 25-29 June, Paris.

• Séminaire Systèmes complexes en Sciences Sociales (Complex Systems in Social Sciences seminar): study day on opinion diffusion, CAMS, EHESS. 4 April, Paris.

Publications

• Beyond ReLU: Bifurcation, Oversmoothing, and Topological Priors, accepted at ICML 2026 (spotlight). Erkan Turan, Gaspard Abel, Maysam Behmanesh, Emery Pierson, Maks Ovsjanikov.

• Uncovering Social Network Activity Using Joint User and Topic Interaction, accepted in IEEE Transactions on Computational Social Systems, 2026. Gaspard Abel, Argyris Kalogeratos, Jean-Pierre Nadal, Julien Randon Furling.

• Reducing Recurrent Competitive Epidemics via Dynamic Resource Allocation, submitted to IEEE Transactions on Network Science and Engineering, 2026. Argyris Kalogeratos, Gaspard Abel, Stefano Sarao Mannelli.

• Dual-Criterion Curriculum Learning: Application to Temporal Data, submitted to ECML PKDD 2026. Gaspard Abel, Eloi Campagne, Mohamed Benloughmari, Argyris Kalogeratos.

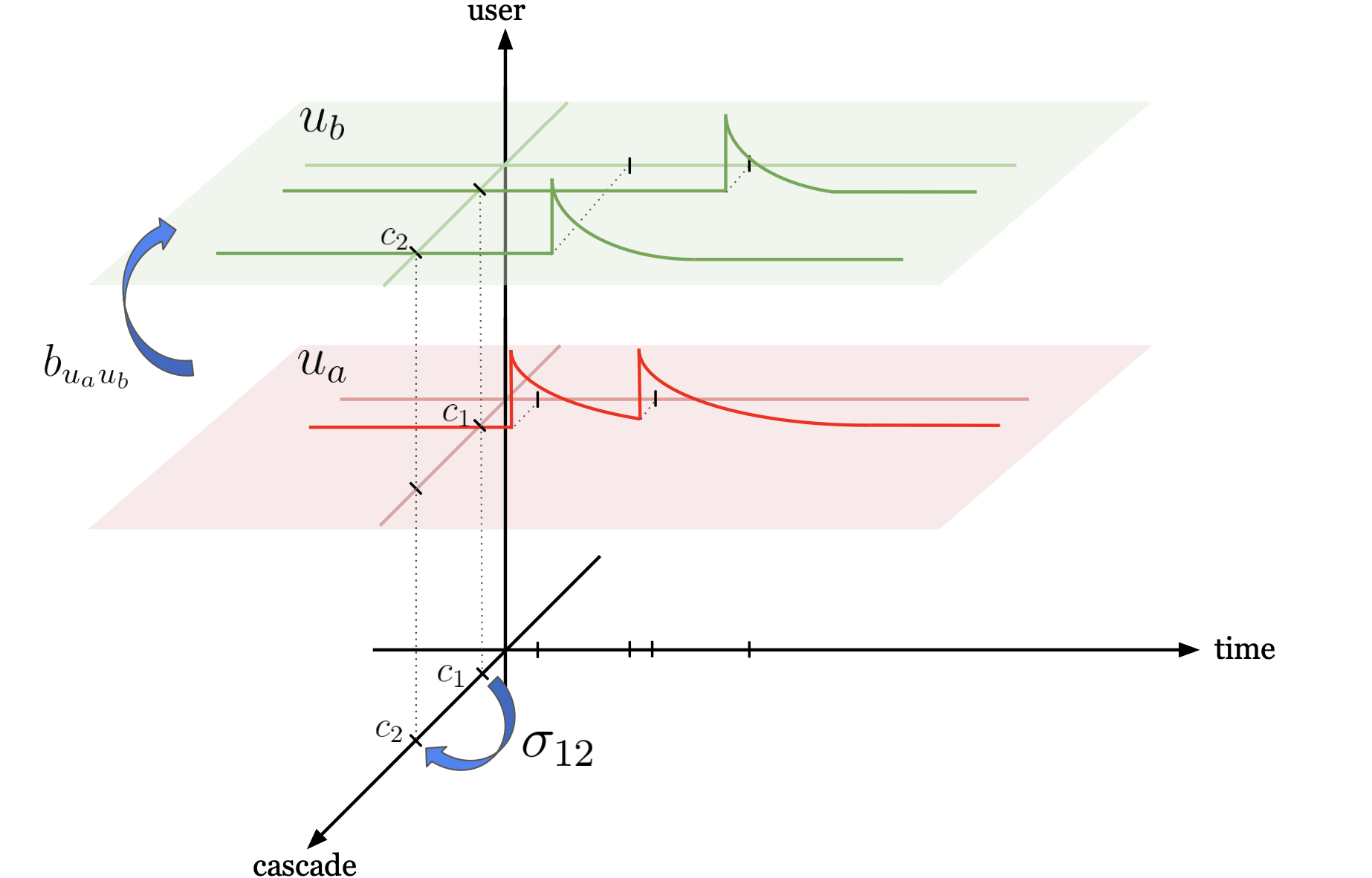

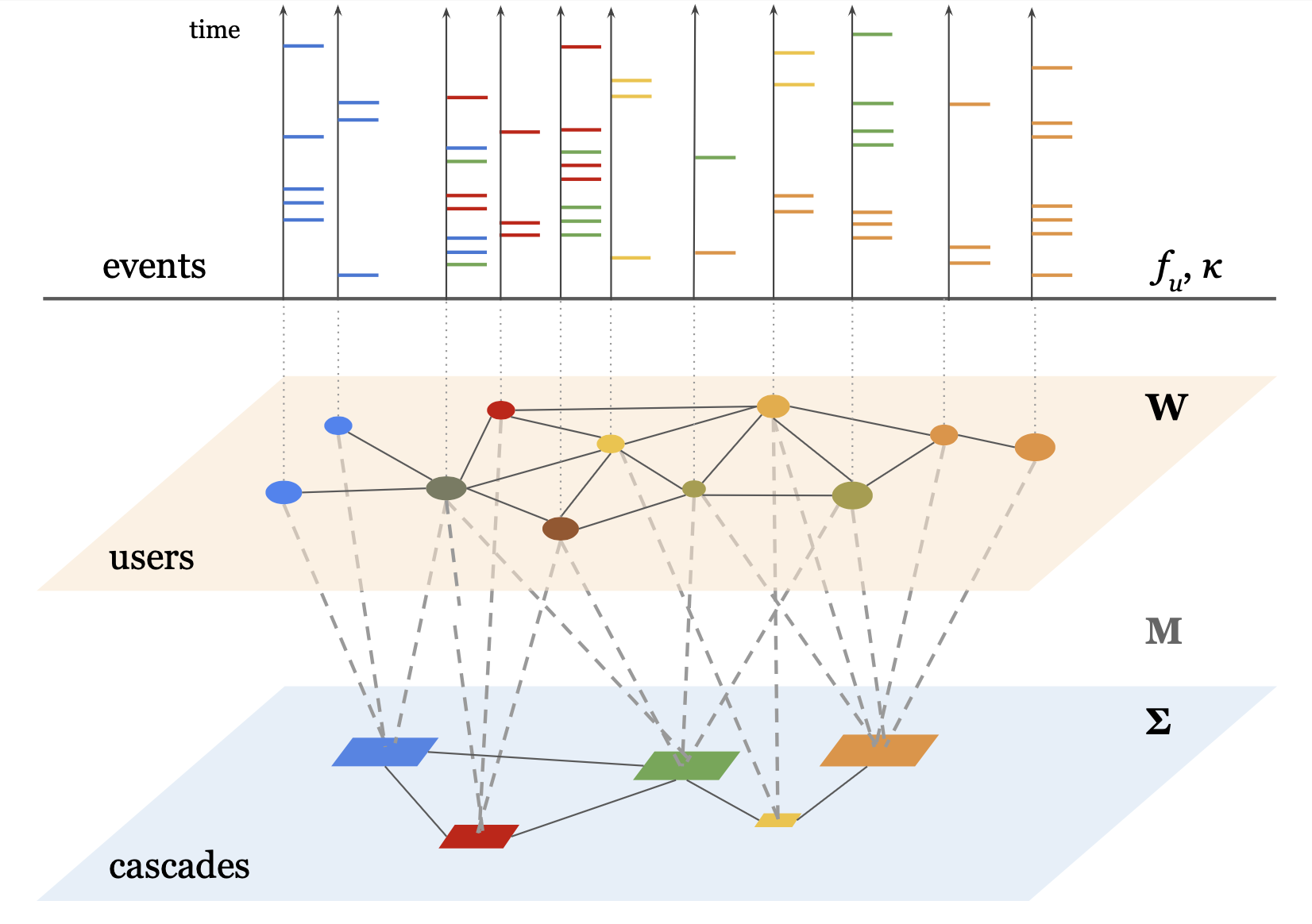

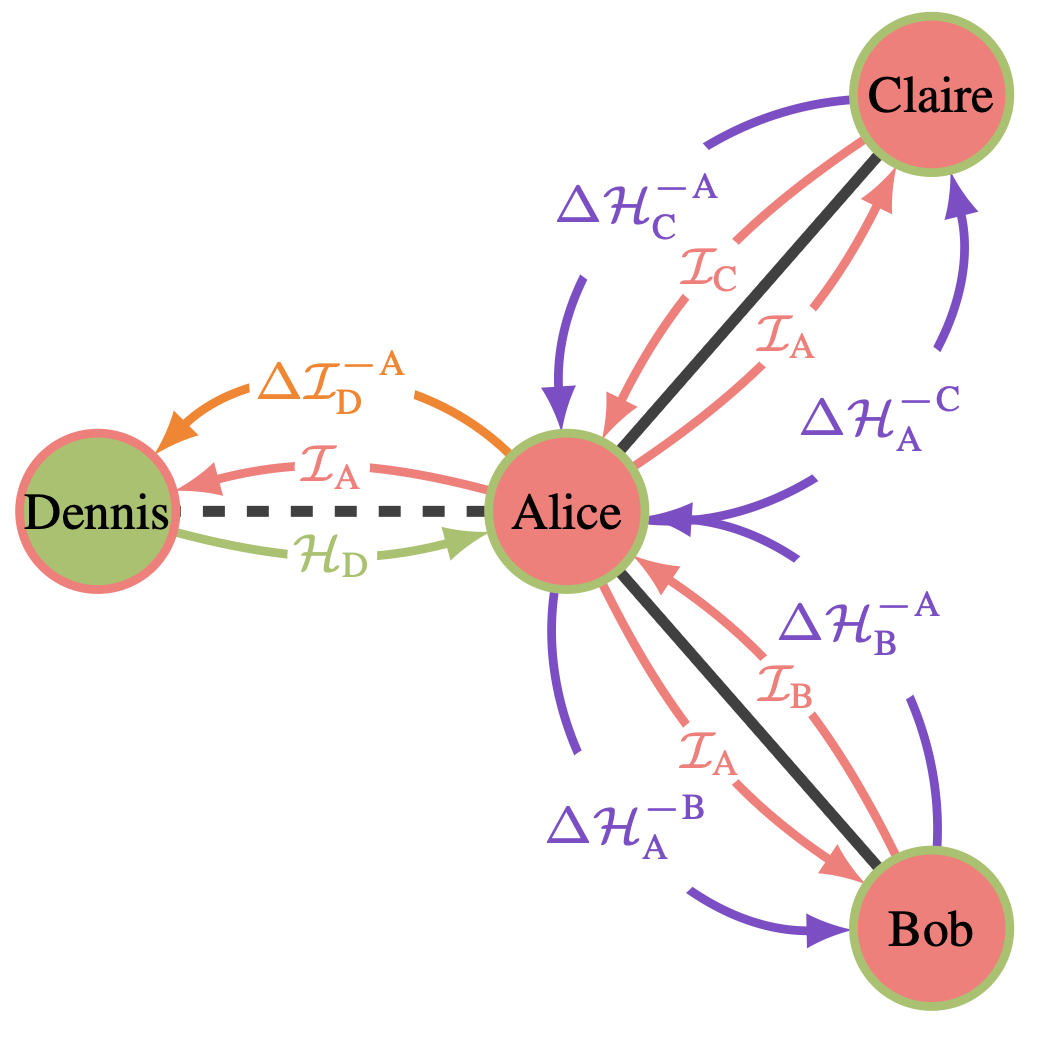

Temporal Point Processes for information diffusion in social networks

This project focuses on the interplay between the dynamics of information diffusion in social networks and the content of the information spread. To model this, we use Multidimensional Marked Hawkes Processes, which are a class of Temporal Point Processes.
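The following sketch illustrates the model class. It evaluates the conditional intensity of a multidimensional marked Hawkes process. This is a minimal sketch, and its assumptions do not come from the article: marks are discrete topics, the excitation factorizes into a user-interaction matrix and a topic-interaction matrix, and the kernel is exponential with a single decay rate beta. The function and parameter names (hawkes_intensity, user_adj, topic_adj) are hypothetical.

```python
import math

def hawkes_intensity(t, events, mu, user_adj, topic_adj, beta):
    """Conditional intensity lambda[u][c](t) for every user u and topic (mark) c.

    Assumed form (illustrative only, not the article's exact model):
        lambda[u][c](t) = mu[u][c]
            + sum over past events (t_k, v_k, d_k) with t_k < t of
              user_adj[v_k][u] * topic_adj[d_k][c] * beta * exp(-beta * (t - t_k))
    """
    n_users, n_topics = len(mu), len(mu[0])
    # Start from the baseline (exogenous) rates.
    lam = [[mu[u][c] for c in range(n_topics)] for u in range(n_users)]
    for t_k, v, d in events:
        if t_k >= t:
            continue  # only strictly earlier events excite the process
        kernel = beta * math.exp(-beta * (t - t_k))
        for u in range(n_users):
            for c in range(n_topics):
                lam[u][c] += user_adj[v][u] * topic_adj[d][c] * kernel
    return lam

# Two users, two topics: user 0 posting on topic 0 excites user 1 on the same topic.
mu = [[0.1, 0.1], [0.1, 0.1]]
user_adj = [[0.0, 0.5], [0.0, 0.0]]
topic_adj = [[1.0, 0.0], [0.0, 1.0]]
lam = hawkes_intensity(1.0, [(0.0, 0, 0)], mu, user_adj, topic_adj, beta=1.0)
```

The factorized user × topic form keeps the number of interaction parameters at roughly users² + topics², not (users × topics)². This is one common way to couple who spreads information with what is spread.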

The article can be found here: Uncovering Social Network Activity Using Joint User and Topic Interaction, IEEE Transactions on Computational Social Systems, 2026.

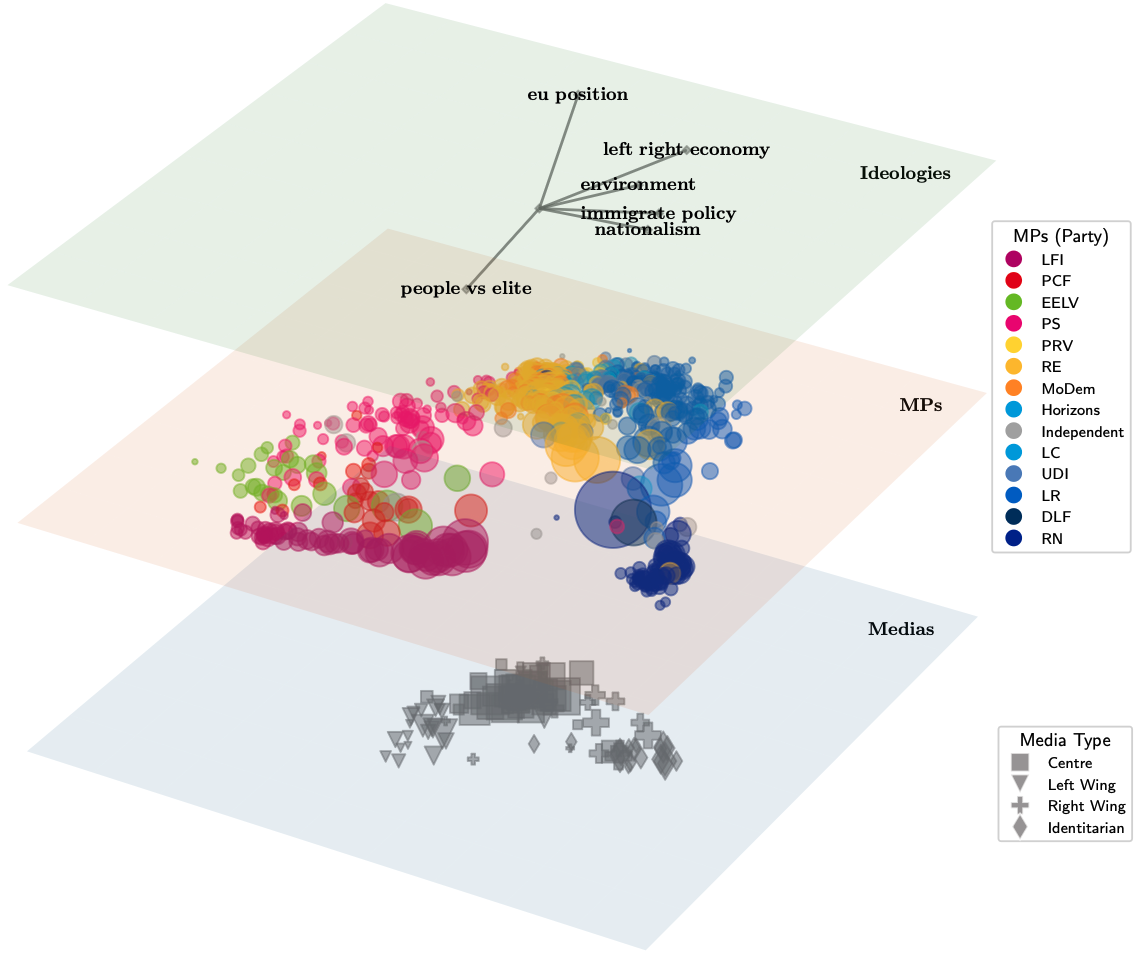

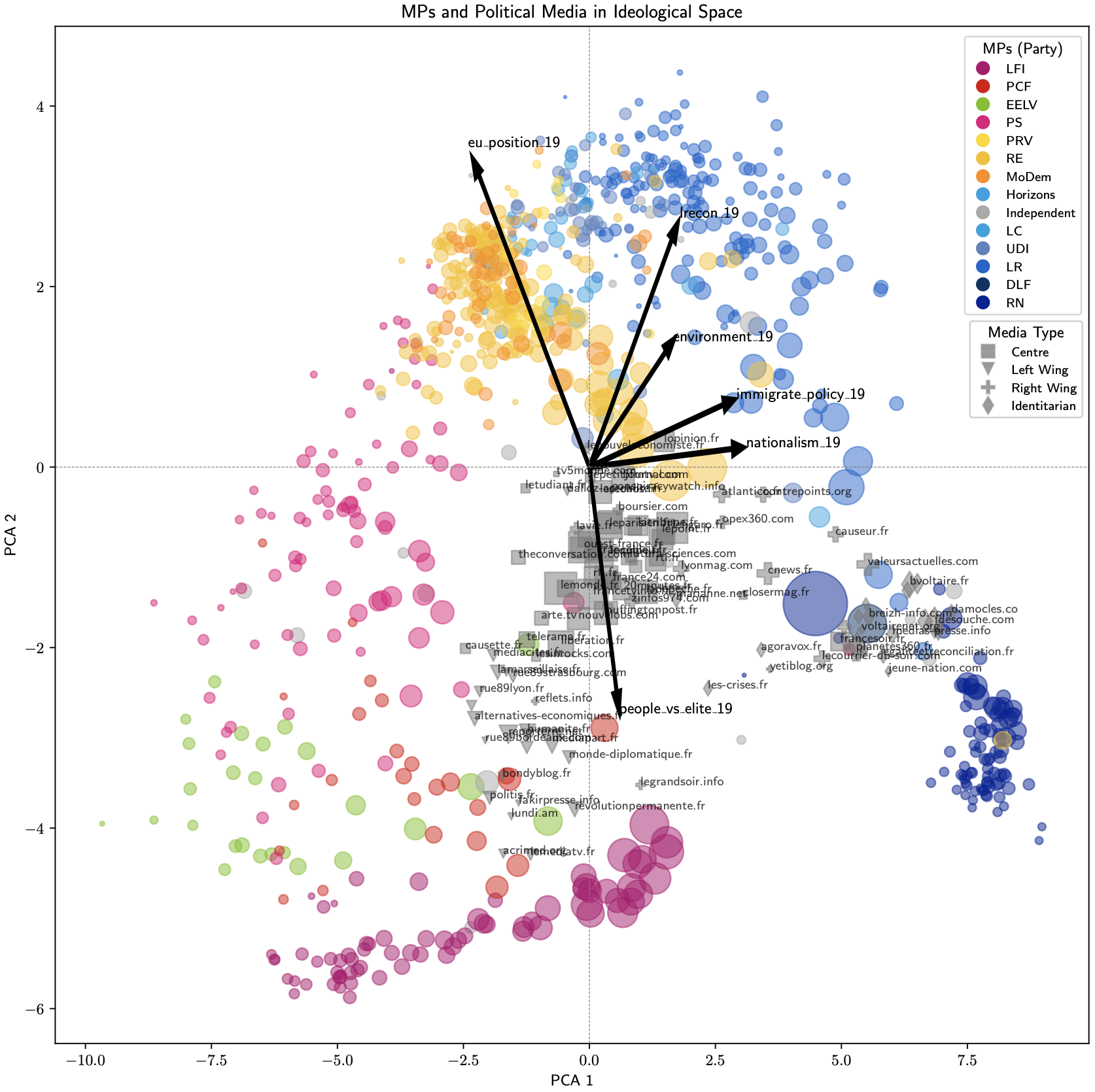

A visualization tool for multilayer networks

MultilayerNetViz is a lightweight Python module for the visualization of complex networks. It can represent the interactions within and between the different layers of a network, which is especially useful for studying socio-semantic networks. The package and documentation can be found in this GitHub repository: MultiLayerNetViz
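A multilayer visualization must draw two kinds of edges: edges within a layer and edges between layers. The sketch below shows that grouping step on a plain edge list. It is a hypothetical helper written for illustration; it is not part of the MultilayerNetViz API, and the toy node and layer names are made up.

```python
from collections import defaultdict

def split_layers(node_layer, edges):
    """Group edges into intra-layer and inter-layer sets.

    node_layer: dict mapping each node to its layer name.
    edges: iterable of (u, v) pairs.
    """
    intra = defaultdict(list)  # layer -> edges inside that layer
    inter = defaultdict(list)  # (layer_a, layer_b) -> edges across two layers
    for u, v in edges:
        lu, lv = node_layer[u], node_layer[v]
        if lu == lv:
            intra[lu].append((u, v))
        else:
            # Sort the layer pair so (a, b) and (b, a) land in the same bucket.
            inter[tuple(sorted((lu, lv)))].append((u, v))
    return dict(intra), dict(inter)

# Toy socio-semantic network: a layer of users and a layer of topics.
node_layer = {"alice": "users", "bob": "users", "climate": "topics", "energy": "topics"}
edges = [("alice", "bob"), ("alice", "climate"), ("climate", "energy"), ("bob", "energy")]
intra, inter = split_layers(node_layer, edges)
```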

Visualization examples:

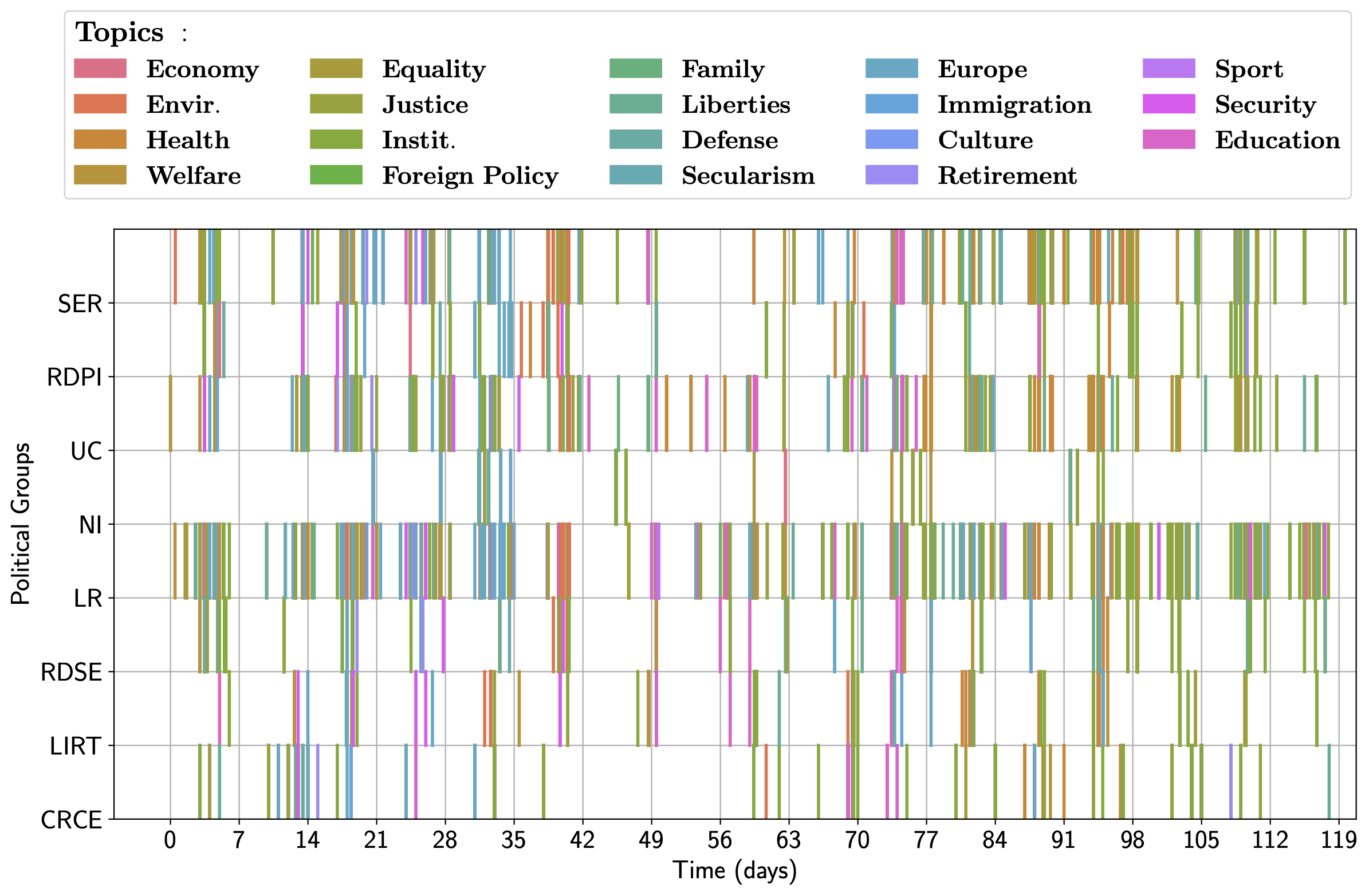

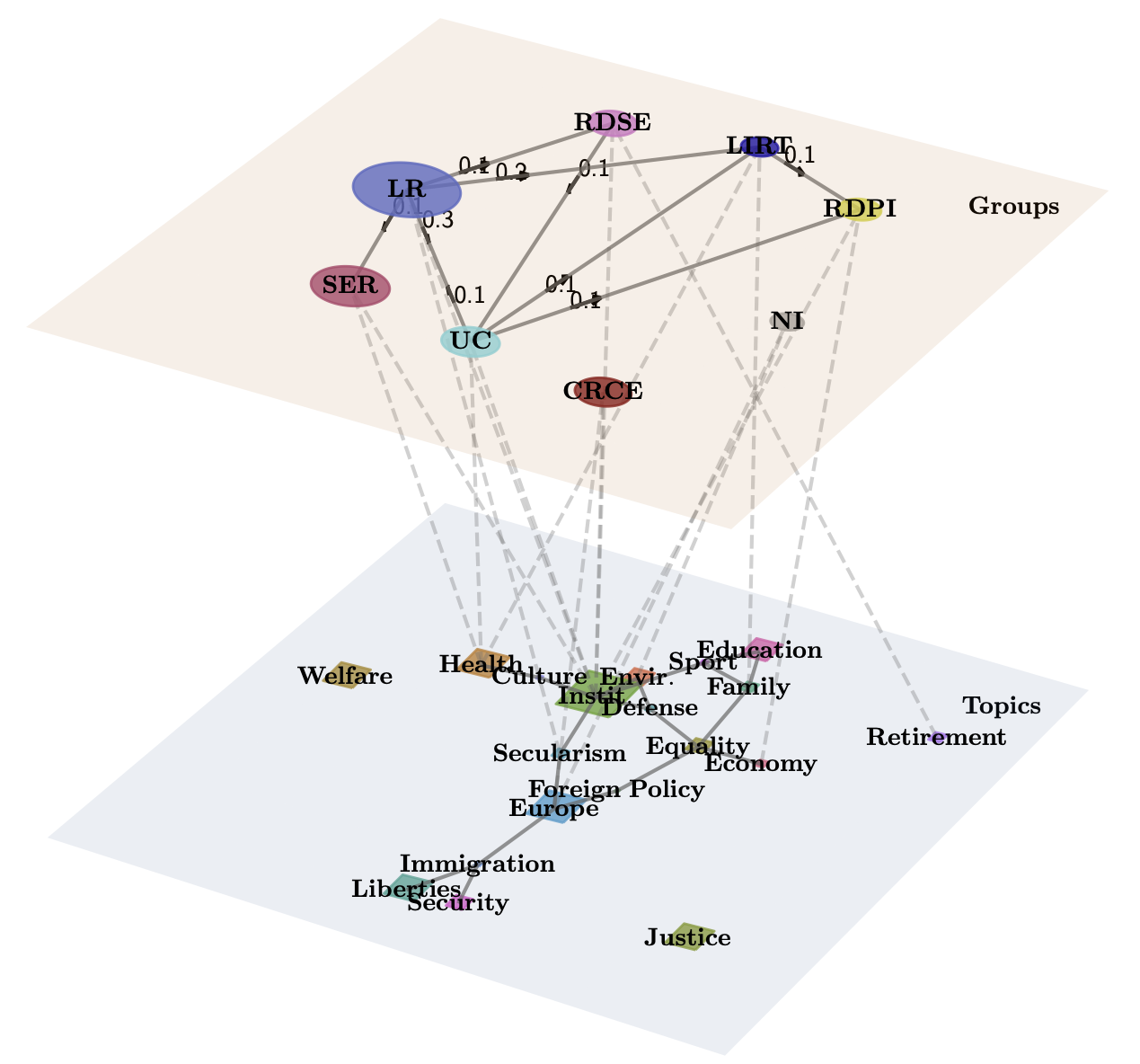

The legislative process of the French Senate is characterized by a complex interplay of various factors, including political agendas, public opinion, and institutional dynamics. As the occurrence of proposed amendments over time shows, legislative activity is strongly clustered in time across every political group. After training the Mixture of Interacting Cascades model on this data, the inferred parameters can be used to describe the information diffusion landscape qualitatively. A rich structure of interactions can be observed, both between political actors and between the topics of the proposed bills.

Another example visualizes the Twitter activity of (ex-)Members of the French Parliament (MPs) and web domains (Media Outlets) [Vendeville, 2025]. The dataset includes the political positions of both MPs and Media Outlets, calculated using CHES (Chapel Hill Expert Survey) [Jolly, 2022]. This survey places agents in a multidimensional ideological space (EU position, left/right economy, nationalism, environment, ...). To visualize the data, the users' ideological positions are projected with PCA, together with the projections of the original ideological axes onto the principal components. The network is then represented as a multilayer network, with one layer for the ideological axes projected in the PCA space, one for the MPs, and another for the Media Outlets.
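The PCA step described above can be sketched with NumPy's SVD. The sketch projects the agents onto the leading principal components. It also projects each original ideological axis, taken as a unit vector along one survey dimension, into the same plane, which gives the usual biplot loadings. This is a minimal sketch: it assumes positions is a plain (agents × dimensions) array, and the function name is hypothetical.

```python
import numpy as np

def pca_biplot_coordinates(positions, n_components=2):
    """Project agents and the original ideological axes onto the leading principal components.

    positions: (n_agents, n_dims) array, one column per ideological dimension.
    Returns (agent_coords, axis_coords):
      agent_coords: (n_agents, n_components) scores of each agent;
      axis_coords:  (n_dims, n_components), row j is the image of the unit vector of dimension j.
    """
    centered = positions - positions.mean(axis=0)
    # Rows of vt are the principal directions, sorted by decreasing singular value.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    components = vt[:n_components]          # (n_components, n_dims)
    agent_coords = centered @ components.T  # project each agent
    axis_coords = components.T              # project each original axis (loadings)
    return agent_coords, axis_coords
```

Principal directions are only defined up to sign, so the plot may come out mirrored between runs or libraries without any change in meaning.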

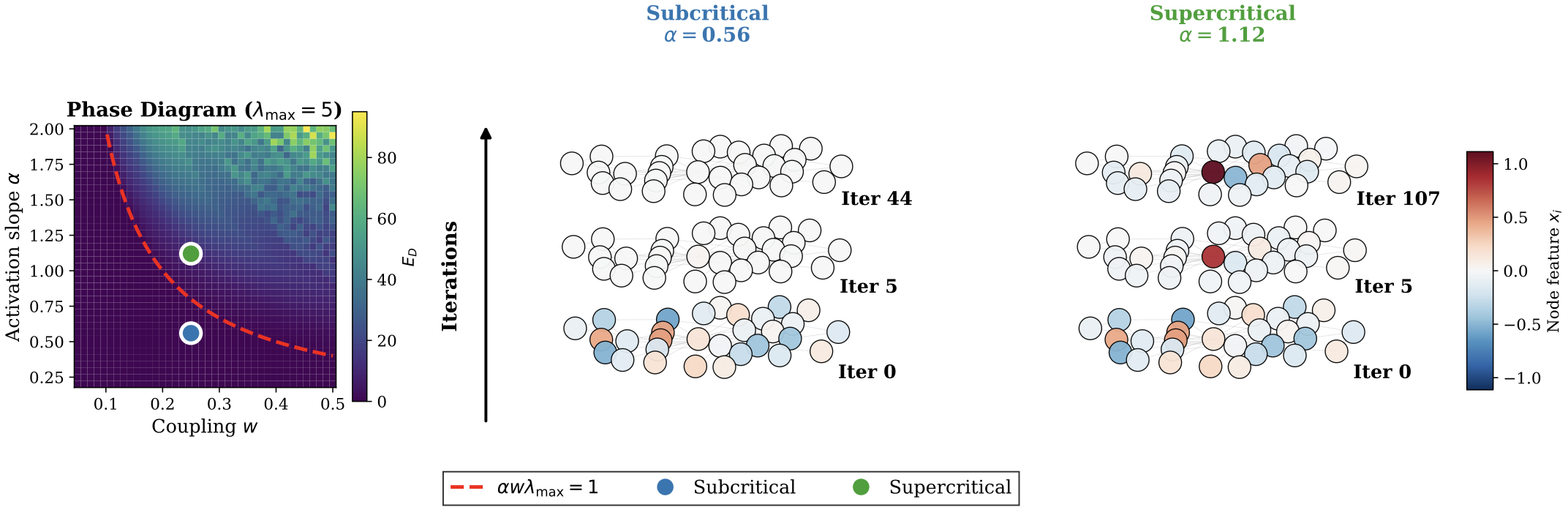

A dynamical system approach to oversmoothing in GNNs

This project focuses on the phenomenon of oversmoothing in Graph Neural Networks (GNNs), which occurs when the representations of nodes in a graph become indistinguishable after multiple layers of message passing.

Graph Neural Networks (GNNs) learn node representations through iterative network-based message-passing. While powerful, deep GNNs suffer from oversmoothing, where node features converge to a homogeneous, non-informative state. We re-frame this problem of representational collapse from a bifurcation theory perspective, characterizing oversmoothing as convergence to a stable "homogeneous fixed point". Our central contribution is the theoretical discovery that this undesired stability can be broken by replacing standard monotone activations (e.g., ReLU) with a class of functions. Using Lyapunov-Schmidt reduction, we analytically prove that this substitution induces a bifurcation that destabilizes the homogeneous state and creates a new pair of stable, non-homogeneous patterns that provably resist oversmoothing. Our theory predicts a precise, nontrivial scaling law for the amplitude of these emergent patterns, which we quantitatively validate in experiments. Finally, we demonstrate the practical utility of our theory by deriving a closed-form, bifurcation-aware initialization and showing its utility in real benchmark experiments.
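The "homogeneous fixed point" can be seen in the simplest setting: linear message passing with no weights and no activation. The sketch uses a random-walk normalized adjacency with self-loops on a connected graph. Under repeated propagation, node features converge to a constant vector and the Dirichlet energy (the sum of squared feature differences across edges) decays to zero. This illustrates oversmoothing only. It is not the bifurcation analysis or the activations proposed in the article, and the helper names are hypothetical.

```python
import numpy as np

def row_normalized_adjacency(adj):
    """Random-walk normalization D^{-1}(A + I), with self-loops added."""
    a_hat = adj + np.eye(adj.shape[0])
    return a_hat / a_hat.sum(axis=1, keepdims=True)

def dirichlet_energy(adj, x):
    """Sum of ||x_i - x_j||^2 over undirected edges (i, j), each counted once."""
    rows, cols = np.nonzero(np.triu(adj))
    return float(np.sum((x[rows] - x[cols]) ** 2))

def propagate(adj, x, n_layers):
    """Apply n_layers rounds of linear mean-aggregation message passing, tracking the energy."""
    p = row_normalized_adjacency(adj)
    energies = [dirichlet_energy(adj, x)]
    for _ in range(n_layers):
        x = p @ x
        energies.append(dirichlet_energy(adj, x))
    return x, energies

# Path graph 0-1-2-3 with a single scalar feature per node.
adj = np.array([[0, 1, 0, 0], [1, 0, 1, 0], [0, 1, 0, 1], [0, 0, 1, 0]], dtype=float)
x_final, energies = propagate(adj, np.array([[1.0], [0.0], [0.0], [0.0]]), n_layers=100)
```

Here every node converges to the degree-weighted average of the initial features, 2·1/10 = 0.2, with degrees counted including self-loops. The initial signal that distinguished node 0 is lost.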

The article can be found here: Beyond ReLU: Bifurcation, Oversmoothing, and Topological Priors, accepted at ICML 2026 (spotlight).

Dynamic Resource Allocation for Recurrent Epidemics

This project proposes a greedy algorithm for dynamic resource allocation in recurrent and competitive epidemics.

Motivated by scenarios of epidemic competition, as well as by how social contagions spread at the level of individuals, this work considers the competition between two conflicting node states that spread over a social graph according to a generic diffusion process. For this setting, we introduce the Generalized Largest Reduction in Infectious Edges (gLRIE), a dynamic resource allocation strategy that favors the preferred state over the other. Our analysis assumes a generic continuous-time SIS-like (Susceptible-Infectious-Susceptible) diffusion model that allows for arbitrary node transition rate functions, and for competition between the healthy (positive) and infected (negative) states, which are both diffusive at the same time yet mutually exclusive at each node. The strategy follows a minimum-risk-maximum-gain principle, and its features are particularly relevant for social contagion phenomena. As with the LRIE strategy that it generalizes, we show that in this context gLRIE remains a greedy solution for minimizing the number of infected network nodes over time. Finally, simulations compare the proposed strategy with existing alternatives and show that gLRIE outperforms them across a spectrum of scenarios, including a realistic counter-contagion campaign in a small, well-monitored community.
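The "largest reduction in infectious edges" idea behind LRIE can be sketched in its simplest form. The sketch assumes an undirected, unweighted graph, a single snapshot of node states, and a treatment that heals a node instantly. Under these assumptions, healing infected node u removes its edges to healthy neighbours from the infectious set and adds its edges to infected neighbours, so the reduction is (#healthy neighbours − #infected neighbours). The greedy loop treats the best node, updates the states, and repeats until the budget is spent. This simplified count is not gLRIE, which handles arbitrary transition rate functions and competing diffusive states, and the function names are hypothetical.

```python
def infectious_edges(adj, infected):
    """Count edges whose endpoints are in different states (one infected, one healthy)."""
    return sum(1 for u in adj for v in adj[u] if u < v and infected[u] != infected[v])

def edge_reduction(adj, infected, u):
    """Drop in the number of infectious edges if infected node u is healed."""
    healthy = sum(1 for v in adj[u] if not infected[v])  # these edges stop being infectious
    sick = sum(1 for v in adj[u] if infected[v])          # these edges become infectious
    return healthy - sick

def greedy_treatment(adj, infected, budget):
    """Heal up to `budget` nodes one at a time, each time picking the largest reduction."""
    state = dict(infected)  # copy so the caller's states are not modified
    treated = []
    for _ in range(budget):
        candidates = [u for u, is_inf in state.items() if is_inf]
        if not candidates:
            break
        best = max(candidates, key=lambda u: edge_reduction(adj, state, u))
        state[best] = False
        treated.append(best)
    return treated, state

# Node 0 is a hub linked to 1, 2, 3; node 3 is also linked to 4. Nodes 0 and 3 are infected.
adj = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0, 4], 4: [3]}
infected = {0: True, 1: False, 2: False, 3: True, 4: False}
treated, state = greedy_treatment(adj, infected, budget=1)
```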

The article can be found here: Reducing Recurrent Competitive Epidemics via Dynamic Resource Allocation (submitted to IEEE Transactions on Network Science and Engineering).

Density and Loss-based Curriculum Learning for Temporal Data Analysis

This project investigates how density-based and loss-based strategies can provide complementary criteria for difficulty assessment in Curriculum Learning. We propose a modular framework to study this and evaluate it on a time-series forecasting task.

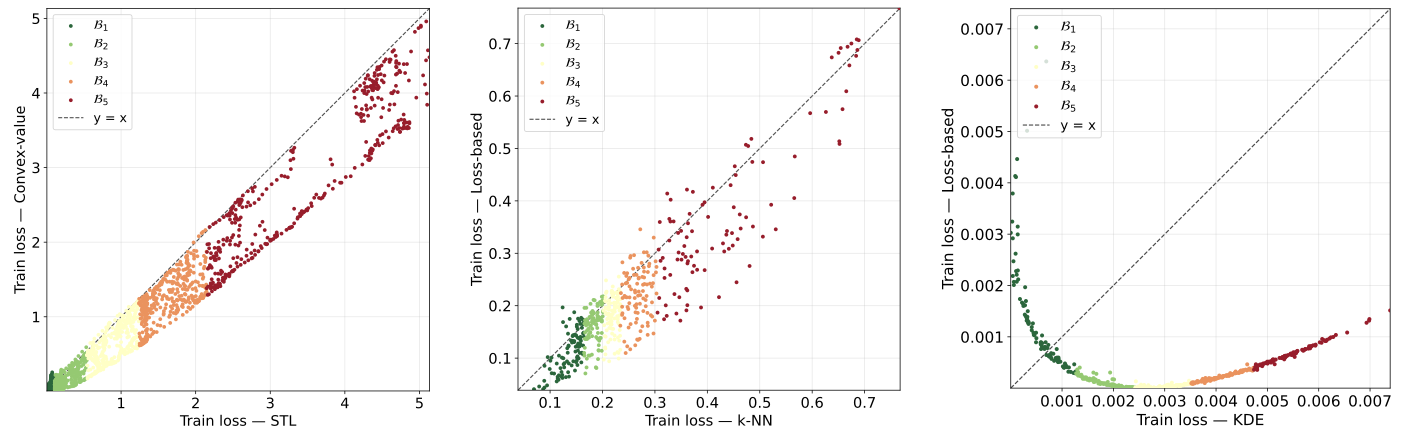

Curriculum Learning (CL) is a meta-learning paradigm that trains a model by feeding it data instances incrementally according to a schedule based on difficulty progression. Defining meaningful difficulty assessment measures is crucial and is most often the main bottleneck for effective learning; moreover, the heuristics employed are in many cases only application-specific. In this work, we propose the Dual-Criterion Curriculum Learning (DCCL) framework, which combines two views of instance-wise difficulty: a loss-based criterion is complemented by a density-based criterion learned in the data representation space. Essentially, DCCL calibrates training-based evidence (loss) under the consideration that data sparseness amplifies learning difficulty. As a testbed, we choose the time-series forecasting task. We evaluate our framework on multivariate time-series benchmarks under the standard One-Pass and Baby-Steps training schedules. Empirical results show the benefit of density-based and hybrid dual-criterion curricula over loss-only baselines and standard non-CL training in this setting.
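The sketch below shows how a dual-criterion difficulty score could feed the two schedules. It rests on several assumptions that are not DCCL's actual formulas. Sparsity is estimated as the mean distance to the k nearest neighbours in representation space. The two criteria are min-max normalized and mixed convexly with a hypothetical weight. One-Pass visits each difficulty bucket once, easiest first. Baby-Steps keeps all easier buckets and adds the next one at each stage.

```python
import numpy as np

def knn_sparsity(z, k):
    """Mean distance to the k nearest neighbours in representation space (larger = sparser)."""
    dists = np.linalg.norm(z[:, None, :] - z[None, :, :], axis=-1)
    # Column 0 of each sorted row is the point itself (distance 0), so skip it.
    return np.sort(dists, axis=1)[:, 1:k + 1].mean(axis=1)

def minmax(x):
    """Rescale to [0, 1]; a constant input maps to all zeros."""
    span = x.max() - x.min()
    return np.zeros_like(x) if span == 0 else (x - x.min()) / span

def dual_difficulty(losses, z, k=5, weight=0.5):
    """Hypothetical combination: convex mix of normalized loss and normalized sparsity."""
    return weight * minmax(losses) + (1.0 - weight) * minmax(knn_sparsity(z, k))

def one_pass(difficulty, n_stages):
    """One-Pass: train on each difficulty bucket once, easiest first, without revisiting."""
    order = np.argsort(difficulty, kind="stable")
    return np.array_split(order, n_stages)

def baby_steps(difficulty, n_stages):
    """Baby-Steps: each stage adds the next bucket to all easier data seen so far."""
    buckets = one_pass(difficulty, n_stages)
    return [np.concatenate(buckets[:i + 1]) for i in range(n_stages)]

# Four instances: per-instance losses and 1-D representations (the last one is isolated).
losses = np.array([0.0, 1.0, 0.5, 0.25])
z = np.array([[0.0], [1.0], [2.0], [10.0]])
difficulty = dual_difficulty(losses, z, k=1)
stages = baby_steps(difficulty, n_stages=2)
```

In this toy case the isolated instance 3 has only a moderate loss, yet it ends up ranked hardest because of its sparse neighbourhood. This matches the premise that data sparseness amplifies learning difficulty.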

A draft of this article can be found here: Dual-Criterion Curriculum Learning: Application to Temporal Data.